Slash Cloud Costs and Streamline Data Pipelines: A Game-Changing Open-Source Tool

Ray.io changes the basic premise of how data pipelines and distributed systems work

Is your organization drowning in data but thirsty for savings? What if I told you there's a tool that can slash technical hurdles, streamline your data pipelines, and boost responsiveness – all while putting more money back in your pocket?

This article focuses on a tool called Ray (ray.io) and on why I believe it is a game changer in its category.

We'll go over:

What sets Ray apart

What are ETL tools

What are ML pipeline tools

Problems in current ML pipeline flow

Ray and how it solves these problems

Origins of Ray

Links to YouTube videos for further learning

Shaul here. This is the first article in my blog, aimed at engineers who want to make an impact on their organization, and at any technical people looking to improve their craft. I plan to keep the articles short (<5 minutes to read) and practical. I'm excited to start it, and I hope you'll enjoy the read :)

Let's start!

Understanding distributed systems, data pipelines, their relationships, current tooling and how it’s going to change

In this article, I aim to share my recent experience with Ray and articulate why I believe it stands out from other data pipeline and/or machine learning pipeline tools. These are the tools that run the heavy data workflows almost every organization employs to process truly large datasets at great speed. Ray represents a significant departure from existing solutions, and I will highlight its unique features and benefits.

What Sets Ray Apart?

Ray is a tool designed for distributed machine learning (ML) processing. It is often compared to tools like Kubeflow and Airflow, which manage sequential task execution (as seen with ETL tools like SSIS and Azure Data Factory). However, Ray’s approach and capabilities are markedly different from these tools.

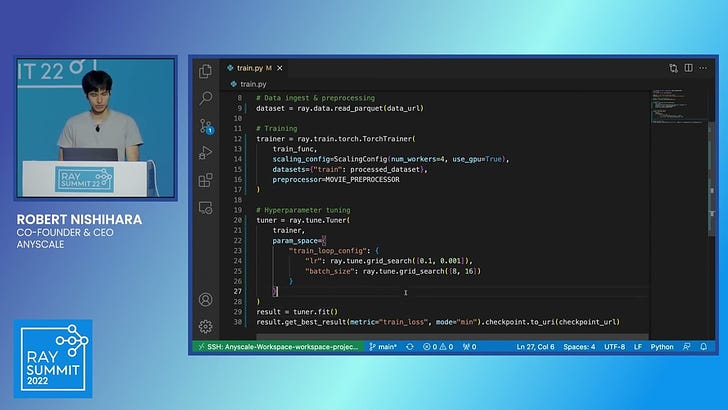

My understanding of Ray deepened after watching a demo by Ray’s founder, Robert Nishihara, which vividly showcased its distinct advantages (see links at the end of this post).

But first, a bit of introduction - ETL Tools

ETL (Extract, Transform, Load) tools are essential for data integration, enabling the extraction of data from various sources, transforming it into a suitable format, and loading it into a target database or data warehouse. These tools automate complex tasks, ensuring data consistency, accuracy, and accessibility. ETL tools are vital in business intelligence and data management strategies and can be broadly categorized into:

Simple drag-and-drop no-code tools: Examples include SSIS (SQL Server Integration Services) and Azure Data Factory.

Programmatic tools: Apache Airflow, which uses code to create pipelines.
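To make the three ETL stages concrete, here is a minimal sketch in plain Python. The sample CSV, the payments table and all function names are made up for illustration; real ETL tools add scheduling, connectors and monitoring on top of this basic shape.

```python
import csv
import io
import sqlite3

# Hypothetical raw input: one row has a missing amount.
RAW_CSV = """name,amount
alice, 10.5
bob,
carol, 7
"""


def extract(text):
    """Extract: read raw rows from a CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows without an amount and normalize the rest."""
    cleaned = []
    for row in rows:
        amount = (row["amount"] or "").strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((row["name"].strip().title(), float(amount)))
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute("CREATE TABLE IF NOT EXISTS payments (name TEXT, amount REAL)")
    conn.executemany("INSERT INTO payments VALUES (?, ?)", rows)
    conn.commit()


def run_etl(text=RAW_CSV):
    conn = sqlite3.connect(":memory:")  # in-memory database, for the demo only
    load(transform(extract(text)), conn)
    return conn


if __name__ == "__main__":
    conn = run_etl()
    print(conn.execute("SELECT name, amount FROM payments").fetchall())
```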

Understanding ML Pipeline Tools

In the last several years, a new category has emerged in software infrastructure: machine learning engineering and pipeline management tools. They are not limited to machine learning; they can manage data and its flow into any kind of algorithm. We’ll call them “ML pipeline tools” for brevity.

ML pipeline tools streamline and automate the development, deployment, and management of machine learning models. They facilitate the entire ML workflow, from data preprocessing to model training, evaluation, deployment, and monitoring. By orchestrating these stages, ML pipeline tools ensure reproducibility, scalability, and efficiency, allowing data scientists to focus on model fine-tuning and insights rather than repetitive tasks.

Examples include Kubeflow, Red Hat OpenShift Data Science (based on Kubeflow), and Amazon SageMaker.

Typical Machine Learning Pipeline Flow

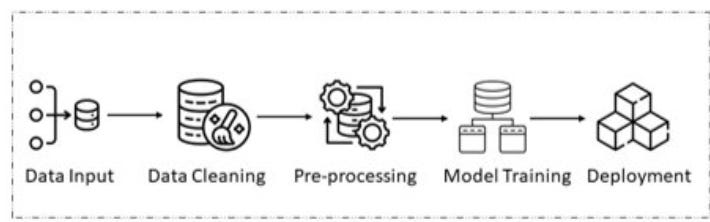

Here’s a simple flow of data in an ML (machine learning) pipeline.

Image Credit: https://www.design-reuse.com/articles/53595/an-overview-of-machine-learning-pipeline-and-its-importance.html

A typical ML pipeline starts with data input, followed by steps for cleaning, preprocessing, training, and ultimately deploying the model. However, this conventional approach has notable drawbacks.

Problems with Common ML Pipelines

As explained by Prof. Ion Stoica (see video link at end of this post), one of Ray’s founders, traditional ML pipelines face several challenges:

Scaling Complexity: Each step (e.g., preprocessing, training, tuning, serving) has to be scaled separately, on different machines, which is both labor-intensive and inefficient. On top of that, each step can have as many as 10 tools to choose from. That’s a lot to learn, a lot to deploy and a lot to scale. Too much work for us ML engineers. :)

Data Transfer Bottlenecks: Moving data between stages necessitates writing and reading from persistent storage, which is slow and cumbersome. What if we didn’t really need to write and read to external storage when going between the steps in the pipeline?

Built for batch processing, not real-time: Because of the scaling issues above (pipelines are often run slower to save costs) and the data transfer issues (which slow things down further), it is difficult to imagine such an ML pipeline running in real time. But sometimes there is a business need to run everything in real time, in response to a user request.
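To picture the data transfer problem, here is a small sketch of a staged pipeline in which every step hands its output to the next one through files. The stage functions, the file names and the toy "model" (just a mean) are hypothetical; the only point is that each handoff costs a write and a read.

```python
import json
import tempfile
from pathlib import Path


def preprocess(raw, out_path):
    """Stage 1: clean the data, then persist it for the next stage."""
    cleaned = [x for x in raw if x is not None]
    out_path.write_text(json.dumps(cleaned))


def train(in_path, out_path):
    """Stage 2: read the cleaned data back, 'train', persist the model."""
    data = json.loads(in_path.read_text())
    model = {"mean": sum(data) / len(data)}
    out_path.write_text(json.dumps(model))


def serve(model_path, x):
    """Stage 3: read the model back and use it on one input."""
    model = json.loads(model_path.read_text())
    return x - model["mean"]


def run_staged(raw, x):
    # A temporary directory stands in for shared persistent storage.
    with tempfile.TemporaryDirectory() as tmp:
        cleaned_path = Path(tmp) / "cleaned.json"
        model_path = Path(tmp) / "model.json"
        preprocess(raw, cleaned_path)
        train(cleaned_path, model_path)
        return serve(model_path, x)


if __name__ == "__main__":
    print(run_staged([1, None, 2, 3], 5))
```

With toy data the extra I/O is invisible, but with large datasets and remote storage these round trips can dominate the runtime.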

What is Ray?

Ray addresses these issues by integrating all stages of the ML pipeline into a single system that is programmed in Python, the language most data scientists know. According to Robert Nishihara, CEO of Anyscale (the company commercializing Ray), this eliminates the need to split the pipeline into discrete steps with persistent storage between them. Instead, Ray can use a Linux-like pipe mechanism to pass data directly between stages, enabling real-time data processing and reducing latency. In some cases this even reduces the need for heavier technology like Apache Spark (which Ion Stoica, one of Ray’s founders, also helped create). Ray is also flexible about scale: you submit tasks to the backend, which scales resources up or down as needed, saving compute when demand is low and using more when necessary - the best of both worlds.

Basic Premises and Novelty of Ray

Unified Python Code: Ray consolidates all ML pipeline stages into Python code, making distributed computing as simple as writing single-node Python code.

Dynamic Resource Allocation: Ray can dynamically allocate resources, such as launching and shutting down multiple EC2 instances as needed, based on the demands of the pipeline.

Concurrent Execution: By creating a "pipe" between adjacent steps, Ray allows for concurrent execution, significantly speeding up the process.

Conceptual Code Example

Consider this simplified code:

input1 = ...

result1 = func1(input1)

result2 = func2(input1)

func3(result1, result2)

Once these functions are declared as Ray tasks, Ray can tell that func1 and func2 do not depend on each other and can run them in parallel, on separate workers if needed. func3 starts only when both results are ready. This is a drastic shift from the traditional step-by-step execution of pipelines, and it allows more flexible, truly distributed systems. However, this powerful capability also demands careful resource management to avoid excessive costs. I am wary of letting data scientists use this freely, as a small mistake can become expensive in allocated resources.
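Here is a runnable sketch of that dependency pattern. It uses Python's standard-library concurrent.futures as a stand-in, so it runs without installing anything, and the bodies of func1, func2 and func3 are invented for illustration. The comments show roughly how the same calls look with Ray's task API (@ray.remote, .remote() and ray.get()).

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical stand-ins for the three functions in the example above.
# With Ray, each of them would be decorated with @ray.remote.
def func1(data):
    return sum(data)


def func2(data):
    return max(data)


def func3(total, largest):
    return total / largest


def run_pipeline(input1):
    with ThreadPoolExecutor(max_workers=2) as pool:
        # func1 and func2 only need input1, so both are submitted right away.
        # Ray shape: result1 = func1.remote(input1); result2 = func2.remote(input1)
        future1 = pool.submit(func1, input1)
        future2 = pool.submit(func2, input1)
        # func3 needs both results, so it waits for them here.
        # Ray shape: ray.get(func3.remote(result1, result2))
        return func3(future1.result(), future2.result())


if __name__ == "__main__":
    print(run_pipeline([1, 2, 3, 4]))
```

The difference is where the work runs: the thread pool stays inside one process, while Ray tasks can be scheduled on other machines in a cluster.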

More complex code than the above (not shown) can show how we can pipe both results into func3. This allows saving storage costs and data transfer costs, reducing cloud runtime costs (depending on use case), and improving pipeline speed significantly (if func3 can start working on the data immediately and not wait for all of it). Truly revolutionary, don’t you think?
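The idea of a downstream step starting before the upstream one has finished can be sketched with plain Python generators. This is not Ray's mechanism, only a single-process illustration with hypothetical stage names: each record flows through every stage before the next one is read, and nothing is written to storage in between.

```python
def read_records(n, log):
    """Upstream stage: produce records one at a time."""
    for i in range(n):
        log.append(f"read {i}")
        yield i


def square(records, log):
    """Middle stage: transform each record as soon as it arrives."""
    for r in records:
        log.append(f"square {r}")
        yield r * r


def total(values, log):
    """Downstream stage: consume values without waiting for the full dataset."""
    acc = 0
    for v in values:
        log.append(f"add {v}")
        acc += v
    return acc


def run(n):
    log = []
    result = total(square(read_records(n, log), log), log)
    return result, log


if __name__ == "__main__":
    result, log = run(3)
    print(result)
    print(log)
```

The log comes out interleaved (read, square, add, read, square, add, and so on), which is the property that lets the last stage start early. Generators interleave work rather than run it in parallel, so this only shows the streaming handoff, not the speedup from running stages on separate workers.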

Competing Technologies

Several technologies exist in the realm of distributed systems, each with unique strengths:

Kubeflow: Simplifies ML workflow deployment on Kubernetes but focuses on orchestration rather than general distributed computing.

Apache Airflow: Highly flexible for authoring, scheduling, and monitoring workflows but not designed for real-time or large-scale distributed computing.

Dask: Scales Python code from a single machine to a cluster and integrates well with the PyData ecosystem, but lacks some advanced features of Ray.

Apache Spark: A powerful analytics engine for big data processing, primarily focused on batch processing, and it can be complex to manage.

None of the above offers Ray’s flexibility or its ability to express truly distributed systems, as in the “code” example above. They can all handle batch workloads, but they lack the optimization points that Ray exposes in that example.

Origins of Ray

Ray was developed by researchers at the University of California, Berkeley's RISELab, in an effort led by Robert Nishihara and Philipp Moritz. The project emerged from the need for a flexible, easy-to-use system for distributed computing, capable of supporting a wide range of applications from large-scale data processing to reinforcement learning. The goal was to create a general-purpose distributed computing platform that was both powerful and user-friendly, addressing the limitations of existing solutions.

In conclusion, Ray offers a revolutionary approach to distributed ML processing, providing seamless integration, dynamic resource allocation, and real-time data processing capabilities. Its unique features make it a compelling alternative to traditional ML pipeline tools, driving efficiency and scalability in machine learning operations.

Links

Ray presented by Robert Nishihara, Anyscale CEO

Anyscale is the company spawned to commercialize Ray, and offers Ray as a cloud service. It is a very insightful and interesting talk.

Robert is a former PhD student in Berkeley’s RISE laboratory, where Ray was developed.

Ray presentation by Ion Stoica

Ion Stoica is a professor at UC Berkeley and one of the Ray cofounders.